From the Burrow

TaleSpire Dev Log 257

Hi folks, the last week has been one where giving updates would have been challenging as most days have consisted of me grumbling at a whiteboard, but let’s do it anyway :p

At least half the week was spent working out how I wanted to store board data on the backend. We knew we didn’t want sharing boards to result in everyone having a duplicate copy of the board on the backend as this would mean 1000 people using a shared board would result in 1000x the storage requirement. Obviously, that means we want different people’s boards to refer to the same data. However, almost all play sessions involve small changes to the board (for example, if a fireball destroys a wall), and we don’t want that first change to result in duplicating the whole board, so we want to be able to store only what has changed.

This has long been planned under the title ‘per-zone sync’. However, the implementation details get a little trickier once we start looking at worst-case numbers. A board (today) is 2048x1024x2048 units in size, and we divide that into 16x16x16 unit zones. That means the worst-case number of zones is 128x64x128=1048576 per board. Not only is that a lot, but each zone isn’t (usually) much data, and we definitely don’t want the book-keeping to be larger than the zone data. Furthermore, TaleSpire needs to be told what to download, so more separate files mean more things the backend has to tell the frontend. Let’s do something simple to help with this: let’s serialize zones together. We will pack zones in 2x2x2 zone chunks (which will later be called sub-sectors), meaning the worst case is now 64x32x64=131072. Not great, but better.[0]

However, these chunks are still reasonably small. They are great for localized changes, but it’s overkill for the board regions that aren’t changing frequently. So let’s make an alternate chunk size of 8x8x8 zones. We can then choose between these larger ‘sectors’ and the smaller ‘sub-sectors’, which gives us options when dividing up the world[1].

So we have 4x4x4=64 sub-sectors to a sector, but quite a few more sectors to the board (16x8x16=2048 in the worst case). If we group sectors using the same 4x4x4 pattern we used for sub-sectors, we get 4x2x4=32 regions of 32x32x32 zones per board. Having a consistent branching factor (64) is nice, and 32 regions will be handy in a couple of paragraphs’ time.
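To sanity-check the numbers above, here is a small Python sketch. The sizes come straight from this post; the function names are just mine for illustration and don't exist in TaleSpire.

```python
# Sizes taken from the post; the helper names are illustrative only.
BOARD_UNITS = (2048, 1024, 2048)
ZONE_UNITS = 16        # a zone is 16x16x16 units
SUB_SECTOR_ZONES = 2   # a sub-sector is 2x2x2 zones
SECTOR_ZONES = 8       # a sector is 8x8x8 zones
REGION_ZONES = 32      # a region is 32x32x32 zones


def cells_per_board(zones_per_cell):
    """Worst-case number of cells with the given edge length (in zones)."""
    count = 1
    for units in BOARD_UNITS:
        count *= units // (ZONE_UNITS * zones_per_cell)
    return count


def containing_cell(zone_coord, zones_per_cell):
    """Coordinate of the cell that contains the given zone coordinate."""
    return tuple(c // zones_per_cell for c in zone_coord)


print(cells_per_board(1))                 # zones: 1048576
print(cells_per_board(SUB_SECTOR_ZONES))  # sub-sectors: 131072
print(cells_per_board(SECTOR_ZONES))      # sectors: 2048
print(cells_per_board(REGION_ZONES))      # regions: 32
```

The same integer division gives you the sub-sector, sector, or region a zone lives in, which is all the "hierarchy" really is.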

Now we have something that can work as a sparse hierarchy of spatial data, let’s look at copies again. Rather than tracking ancestry at the board level, we can have each sector point to the sector it is descended from. This lets us easily walk backward and find all the files contributing to your version of that sector.
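As a rough picture of that walk, here is a Python sketch using a plain dictionary as a stand-in for the sector table. The real thing will be a recursive SQL query; the row layout and file names here are invented for the example.

```python
# Hypothetical in-memory stand-in for the sector table: each row holds a
# file name and the id of the sector it descends from (None for a root).
sectors = {
    1: {"file": "sector-1.bin", "parent": None},
    2: {"file": "sector-2.bin", "parent": 1},
    3: {"file": "sector-3.bin", "parent": 2},
}


def contributing_files(sector_id, table):
    """Follow parent links from a sector back to its oldest ancestor."""
    files = []
    while sector_id is not None:
        row = table[sector_id]
        files.append(row["file"])
        sector_id = row["parent"]
    return files


print(contributing_files(3, sectors))
```

The list comes back newest first, so the first file that contains a given sub-sector is the one that wins.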

One concern arising from recursively walking the sector data is query time. We directly address rows, so the main factors to query speed (I think) will be table size and index kind. Focusing on size, we can actually do something to help here. The first thought is to use table partitioning, but I didn’t like it for two reasons:

- It’s built on table inheritance, and that doesn’t seem to maintain the reference constraints in the child tables

- It looks tricky to ensure we keep sectors from the same region in the same partition

However, we previously mentioned those 32 regions of the board. What if we treated those regions as our partitions, made 32 tables, and handled it manually? Apart from it being a lot of work, it seems promising. However, most boards aren’t going to use every region, so we’d still get clustering around the origin. Unless, of course, we introduce some factor to more uniformly spread the data across the partitions. The way I’m looking at doing it is taking the region-id, adding the low bits from the board-id of the oldest board ancestor, and then taking the result modulo 32. This spreads the data across partitions and means that regions of copied boards live in the same partition as regions of the boards they were copied from[2].
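In code, the partition choice might look something like this Python sketch. I haven't said how many low bits get used, so taking the low 5 bits (enough to cover 32 partitions) is an assumption, as are the names.

```python
PARTITION_COUNT = 32


def partition_for(region_id, root_board_id):
    """Pick a partition from the region id and the oldest ancestor's board id.

    Assumption: "low bits" means the low 5 bits of the board id.
    """
    low_bits = root_board_id & (PARTITION_COUNT - 1)
    return (region_id + low_bits) % PARTITION_COUNT


# A copy shares its oldest ancestor with the original, so both boards map
# each region to the same partition.
print(partition_for(0, 7))
print(partition_for(31, 1))
```

Because only the oldest ancestor's id is used, every copy in a family of boards lands in the same partition for a given region.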

With the DB side starting to make sense, I had hoped to find a way to trivially dedupe the uploaded data itself in S3. The original idea was to name the uploaded chunks by their hash. That way, two identical chunks will get the same name and thus overwrite each other. The problem is that you can get hash collisions, but these can be minimized by hashing the data with one hash[3], prepending that to the data, and then hashing with a second hash. S3 will validate an upload with md5 if you request it to, so that seemed a logical choice.
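Here is a minimal Python sketch of that naming scheme. The first hash in this post would be xxhash3; Python doesn't ship that, so blake2b stands in for it here purely for illustration, and the function name is made up.

```python
import hashlib


def chunk_name(data: bytes) -> str:
    """Name a chunk by hashing it twice, as described above."""
    # Stand-in for the fast first hash (xxhash3 in the post); blake2b is
    # used only because it is in Python's standard library.
    first = hashlib.blake2b(data, digest_size=8).digest()
    # The second hash covers the first hash prepended to the data.
    return hashlib.md5(first + data).hexdigest()


print(chunk_name(b"zone data"))
```

Identical chunks always get the same name, and an accidental collision now needs both hashes to collide at once.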

However, a huge issue is that we have only made it hard to accidentally get a hash collision; it doesn’t stop people from being malicious and trying to break other peoples’ boards. There is no great answer to this. The only thing to do is upload with a unique name and then have a server-side process validate the file contents before allowing it to be de-duplicated. I’ll be adding this after Early Access has shipped.

Speaking of md5, now that compression is jobified, the md5 calculation is one of the only parts of saving a board that we don’t have in a job yet. To remedy this, I’m looking at porting the md5 implementation from https://github.com/kazuho/picohash to Burst. This looks straightforward enough and will be a nice change from the planning of the last week.

While planning, I’ve been weighing up different potential implementations, and this has required reading into a bunch of SQL and Erlang topics. One neat diversion I went on was looking at binary serialization for our websocket messages to the server. Previously I had looked at things like protobufs and Cap’n Proto. However, I had ignored the fact that Erlang has its External Term Format (ETF). ETF is a simple binary format which Erlang uses when communicating between nodes. Some of the message kinds only make sense within Erlang, but a subset suitable for external use has been identified, called BERT.

I played around with BERT, but I didn’t like how floats were encoded. ETF has a newer, nicer way of encoding them, so I took some inspiration from BERT and started writing a simple Burst compatible way to write ETF messages from C#. I was quickly able to encode maps, tuples, floats, and strings, and sending them as binary messages to the server was also trivial.[4] The next step would be decoding and updating the backend’s code generator to use this. It should result in faster encoding/decoding with no garbage and smaller messages over the wire. Still, I’m going to leave this until after the Early Access release to avoid risking delaying the release even further.
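To give a feel for how small this binary format is, here is a Python sketch of an encoder for the same handful of types, using the tags from Erlang's external term format. It is not the C#/Burst code, just an illustration. Sending strings as Erlang binaries is a choice I made for the sketch.

```python
import struct

VERSION = b"\x83"  # 131, the version byte that starts every encoded term


def _encode(term) -> bytes:
    if isinstance(term, bool):
        raise TypeError("booleans are atoms in Erlang; not covered by this sketch")
    if isinstance(term, float):
        # NEW_FLOAT_EXT (70): 8-byte big-endian IEEE 754 double.
        return b"\x46" + struct.pack(">d", term)
    if isinstance(term, int):
        if 0 <= term <= 255:
            return b"\x61" + bytes([term])        # SMALL_INTEGER_EXT (97)
        # INTEGER_EXT (98): 32-bit signed; struct raises if out of range.
        return b"\x62" + struct.pack(">i", term)
    if isinstance(term, str):
        raw = term.encode("utf-8")
        # BINARY_EXT (109): 4-byte length followed by the bytes.
        return b"\x6d" + struct.pack(">I", len(raw)) + raw
    if isinstance(term, tuple):
        if len(term) > 255:
            raise ValueError("large tuples are not covered by this sketch")
        # SMALL_TUPLE_EXT (104): 1-byte arity followed by the elements.
        return b"\x68" + bytes([len(term)]) + b"".join(_encode(t) for t in term)
    if isinstance(term, dict):
        # MAP_EXT (116): 4-byte pair count followed by key/value pairs.
        body = b"".join(_encode(k) + _encode(v) for k, v in term.items())
        return b"\x74" + struct.pack(">I", len(term)) + body
    raise TypeError(f"unsupported term: {type(term).__name__}")


def encode_term(term) -> bytes:
    """Encode a Python value as an external-term-format message."""
    return VERSION + _encode(term)


print(encode_term({"pos": (1.5, 2.0, 3.25)}).hex())
```

The float case is the one I cared about: a tag byte plus 8 bytes, rather than the older 31-byte text encoding.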

I think that is the lot for this last week! I’m hoping to start making progress more quickly now that the plan has taken shape. I’ve already started writing the SQL and aim to start the Erlang and C# portions as soon as possible.

Hope you are all well, Peace.

[0] Of course, we can also add limits of how many zones can be in each board, and we’ll almost certainly do that.

[1] This hierarchical spatial subdivision is probably making some of you think of octrees. Me too :P however, this approach will give us much shallower trees, which will be good for limiting the depth of the recursive SQL queries to get the data.

[2] Naturally, this still means that popular boards will introduce a lot of extra rows to the partition in an unbalanced way. Still, each popular board will be uniformly spread across the partitions too, so it all should even itself out.

[3] I’m looking at xxhash3 as it’s super fast and has a Burst compatible implementation already made by Unity

[4] Given that we are now not using BERT, we could go another step further and make our own binary format with only what we need. This is definitely an option, but moving to ETF (Erlang’s External Term Format) first is a decent step that means we don’t have to write an encoder/decoder for the Erlang side. We can swap out the implementation once we have moved to binary messaging and ironed out the kinks.

TaleSpire Dev Log 256

Heya all!

Today and tomorrow are somewhat taken up by what I’m hoping is the last of the moving business. However, I’ve had some time this evening to start digging into the per-zone sync.

I started off working out what we would need in a download manager. As I was doing this, I got distracted thinking about the compression and decompression of zones. Currently, we use GZipStream, which has been fine, but it would be way better if it played nicer with Unity’s job system and if it could work without creating garbage. I also love that AsyncReadManager.Read gives a job handle as that would allow us to chain the load and decompress if we could work out how to decompress from a job.

I knew that Burst compiled jobs can call native code, so I searched for a simple zlib implementation written in C. I found a library called miniz, which looked ideal if you use the low-level functions as it doesn’t allocate any memory when de/compressing. I then found a .NET wrapper called net-miniz, and after a read of its code, I forked it so we could make a version that works with Unity’s job system.

It was pretty easy work, and we now have exactly what we wanted. I’ve not settled on an API yet, but I’ll publish the fork when I’ve worked that out and tested it a bit.

So it’s been a very successful evening. As mentioned, I have more work to do at the old flat tomorrow, but I expect I’ll get some time to start looking into database changes we’ll need for per-zone-sync and eventual support for sharing boards :)

Ciao

TaleSpire Dev Log

Heya folks,

Lots of coding has been going on behind the scenes as usual. A lot of that work has been centered around the picker.

In the STAGING buffer technique we were using, I knew that we were meant to pull the results on the following frame. However, I had been cheeky and tried to pull it on the Unity CommandBuffer event AfterEverything. This didn’t work well, and I saw significant delays on the render thread; it makes sense and felt like something I would have to change.

We added support for pixel picking to the dice and made it play nice with hide volumes (so you can’t pick things that should be invisible). While coding that I noticed that hide volumes were not applied correctly to pasted tiles or tiles created by redoing placing tiles. I chased this down to a simple case where we were not setting up a tile’s state before trying to apply the hide volumes to that state.

On the subject of picking: After a little experimentation, we switched from using the depth buffer from the currently rendering scene to giving the pixel picker its own depth buffer. This way, we could easily exclude things we didn’t want to be pickable. We decided to try this after noticing the tile/prop/creature preview would be detected by the picker when placing them, as that would have been a bit annoying to work around.

The next task was simple but takes a little context to explain. The picker and physics raycasting code returns a reference to tiles/props, which is basically just a fancy pointer[1]. Tile & Prop data can be moved when a zone is modified so, if you want to store a reference to a tile/prop that is valid for multiple frames, we make a handle that has a safe way to look up an asset at the expense of some performance. Previously you would create a new handle whenever you wanted to hold on to a tile/prop, but allocating this object means that the GC has to clean it up at some point[2]. To mitigate this, we turned the handle into a sort of container. You create it once and then push a tile/prop reference into it. You can then replace the reference in the handle or clear it.

Then there were a bunch of physics-related tasks:

- A couple of bugs in our wrapper around Unity.Physics resulted in ray-casts hitting things that had already been destroyed.

- Replace the mesh colliders around the creature base with a single CylinderCollider

- Dice had not been set up to interact with creatures

- Improving handling of framerate spikes

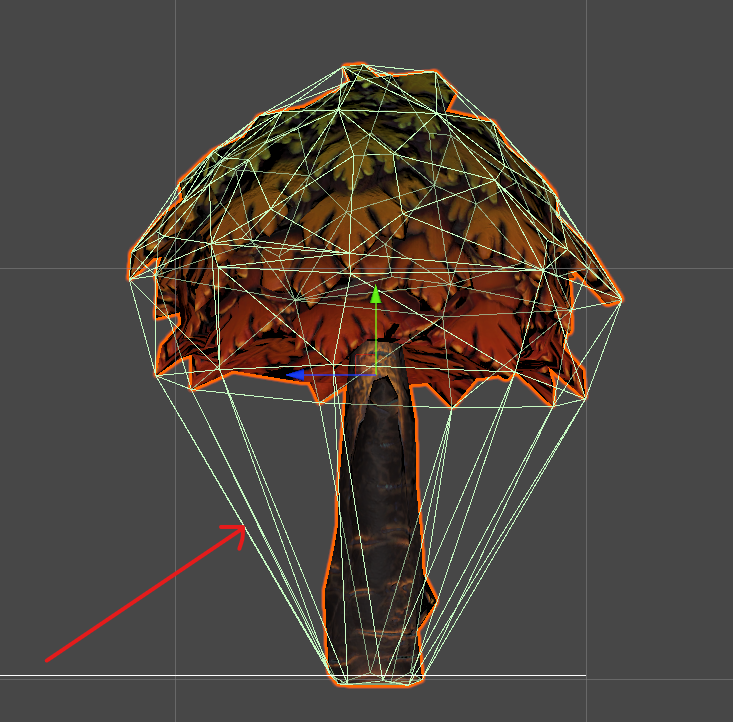

- My code for creating the cylinder geometry was off by 90degrees, so we ended up with stuff like this happening

Note: It is super obvious what is happening in this picture, but when I noticed it, the only cylinder collider was around the creature’s base, and so I thought I still was messing up creature/dice interactions. I had a good solid facepalm when I finally saw what was up.

The following item on the bug list was that shadows were not respecting hide volumes. This was harder than it should have been due to hiccups we’d had while rewriting the engine. Let’s get through the basics:

- GameObjects were too slow

- Unity has calls to render objects using instancing without using GameObjects

- Those calls cause issues with shadows as Unity can’t cull them per-light and so does way too much work

- Unity added a new thing called a BatchRendererGroup, which lets you make big batches of things to render and implement the culling yourself (Yay!)

- We implemented our new code based on the BatchRendererGroup

- What wasn’t documented was that specifying per-instance data only works if you are using their new Scriptable-Rendering-Pipeline (ARGGGHH)

- We do not have the time to write our own rendering-pipeline and port all the shaders

- We decided to implement our own GPU culling and use indirect rendering to draw the board with shadowcasting turned off and keep using the BatchRendererGroup for shadows.[3]

This has worked well, but as we can’t get per-instance state to the shadow-shaders, we can’t use our usual approach to cull things in hide volumes. Instead, we now check the hide volume state when doing the CPU-side culling of shadows. This would require too many pointer lookups, so we added an extra job to copy the data into another buffer for fast lookup.

Dice ‘sleep’ was next on the agenda. When an object has stopped moving in most physics engines, the engine puts the object to sleep and stops simulating it. The new Unity.Physics library does not have support for sleep built-in as the library is intended as something to be built upon, and different sleep implementations have various tradeoffs. Instead, what we are doing is simply snapping the die to a stop when its velocity and position have stayed at a low value for a given period of time. This stops the dice from drifting but doesn’t stop the physics simulation. To prevent things from (computationally) getting out of hand, we are adding an auto-despawn of dice rolls once they have been at rest for a given duration. This also starts addressing some feature-requests about dice management, so that’s nice.
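The snapping logic is roughly the following, shown here as a Python sketch rather than the real Unity.Physics code. The thresholds are made-up values, and for brevity this version only watches linear speed, not position.

```python
from dataclasses import dataclass

# Illustrative thresholds; the real values are not given in this post.
SPEED_EPSILON = 0.05   # below this speed the die counts as "still"
REST_SECONDS = 0.5     # how long it must stay still before we snap it


@dataclass
class Die:
    velocity: tuple
    still_time: float = 0.0


def update_sleep(die: Die, dt: float) -> bool:
    """Snap the die to a stop once it has been slow for long enough.

    Returns True when the die is considered at rest.
    """
    speed = sum(v * v for v in die.velocity) ** 0.5
    if speed < SPEED_EPSILON:
        die.still_time += dt
    else:
        die.still_time = 0.0  # any real movement resets the timer
    if die.still_time >= REST_SECONDS:
        die.velocity = (0.0, 0.0, 0.0)
        return True
    return False
```

An auto-despawn timer could hang off the same `still_time` counter with a longer threshold.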

One thing that is not resolved is that we are seeing inconsistent execution time for some operations related to picking. It’s a bit confusing as it’s the enqueuing of the task that is taking a long time rather than the task itself. I need to collect some more data and post a question on the Unity forums as I’m a bit lost as to why this is happening.

Amongst all this is the usual slew of small issues and fixes, but this is getting plenty long enough already.

I’m immediately switching tasks from front-end work to the per-zone sync that I’ve been talking about for months. The next updates will be all about that.

Until then :)

[1] We implement them as readonly ref structs, so we don’t accidentally store them

[2] GC really is the enemy in games. You might be surprised how badly a poorly timed collection hurts performance, even when using an incremental GC.

[3] Another approach would have been to write our own lighting/shadow system, but that’s also terrifying given how late we already are with the Early Access :/

TaleSpire Dev Log 254

Helloooo!

It’s been an intense few days of coding, and I’m back to ramble about it.

The issue that arose was the behavior of ‘picking’. Picking is the act of selecting something in the 3D space of the board. The current implementation of this uses the raycasting functions of the physics library. For this to work well, you need the collision mesh to match the object as well as possible. However, in general, the more detailed it is, the more work the CPU needs to do to check for collision [0].

Here is an example where we are striking a balance between those two requirements:

Most of the time, it works just fine; however, it makes the game feel unresponsive when it doesn’t. Worse, it could take you out of a critical moment in the story, and that would be a real failure on our part. Here is an example of where this can go wrong:

In this case, the bear is selectable just fine near the paws, but higher up, the tree’s simplistic collider is blocking the selection.

What would be ideal is to pick the creature based on the pixel your cursor is over. But how to do that?

The basic approach is very simple. Render everything again to an off-screen buffer, but instead of the typical textures, you give each object a different color based on their ID. Then you can read the color at the pixel of the screen you are interested in, and whatever color is there is the ID of the thing you are picking. Sounds neat, right? But I’m sure you’re already wondering if this might be very costly, and the answer would be yes!
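The ID-as-color trick is easy to show in a few lines of Python. This sketch packs a 32-bit ID into four 8-bit channels; that layout is an assumption for illustration and isn't necessarily what TaleSpire's picking texture uses.

```python
def id_to_rgba(object_id: int) -> tuple:
    """Split a 32-bit id into four 8-bit color channels."""
    if not 0 <= object_id < 2**32:
        raise ValueError("id must fit in 32 bits")
    return (
        (object_id >> 24) & 0xFF,
        (object_id >> 16) & 0xFF,
        (object_id >> 8) & 0xFF,
        object_id & 0xFF,
    )


def rgba_to_id(rgba: tuple) -> int:
    """Rebuild the id from the color read back at the cursor's pixel."""
    r, g, b, a = rgba
    return (r << 24) | (g << 16) | (b << 8) | a


print(id_to_rgba(0x01020304))   # (1, 2, 3, 4)
print(rgba_to_id((1, 2, 3, 4)))  # 16909060
```

The important part is that the picking pass must not blend or filter these colors, or the ID gets mangled.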

We can do a lot to make it less costly though. We know we only need to consider assets in zones[1] that are under the cursor. Next, what if we have a cheap way of checking if they might be the asset under the cursor? In that case, we could greatly reduce the amount to draw.

Indeed we can do both those things. For the first, it’s done pretty much as you would expect; check if the ray from the cursor intersects each zone. For the second, our culling is done on the GPU, so we add some code to our compute shader to also check if the AABB (Axis aligned bounding box) is intersected by the ray.
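For the curious, a ray vs AABB check can be done with the classic "slab" method. Here it is as a Python sketch; our version lives in a compute shader, and I'm not claiming it uses exactly this formulation.

```python
import math


def ray_hits_aabb(origin, direction, box_min, box_max) -> bool:
    """Slab test: does the ray (t >= 0) pass through the box?"""
    t_near, t_far = 0.0, math.inf
    for o, d, lo, hi in zip(origin, direction, box_min, box_max):
        if d == 0.0:
            # Ray is parallel to this pair of planes; it must start between them.
            if o < lo or o > hi:
                return False
            continue
        t0 = (lo - o) / d
        t1 = (hi - o) / d
        if t0 > t1:
            t0, t1 = t1, t0
        # Narrow the interval of t values that lie inside every slab so far.
        t_near = max(t_near, t0)
        t_far = min(t_far, t1)
        if t_near > t_far:
            return False
    return True


print(ray_hits_aabb((0, 0, -5), (0, 0, 1), (-1, -1, -1), (1, 1, 1)))  # True
print(ray_hits_aabb((5, 0, -5), (0, 0, 1), (-1, -1, -1), (1, 1, 1)))  # False
```

The same test works for the per-zone check on the CPU, since a zone is just a 16x16x16 box.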

That’s not bad, but we still need to render the whole of each asset. That’s a lot of pixels to draw, given that we only care about one. So we use a feature called the ‘scissor rect’ to specify which portion of the screen we want to draw to[2]

Again this is good, but it would be better still to limit how many of the remaining fragments we need to run the fragment shader for. To do this, we simply render the assets for picking after the buffer has been populated and use the screen’s depth buffer. As long as we keep our fragment shader simple, we can get early z-testing[3] which does precisely what we want.

So now we’ve limited the zones involved, the assets from the zones involved, the triangles in the assets involved, and the fragments involved. This is probably enough for the rendering portion of this technique. Now we need to get the results back.

And getting the data back, as it turns out, is where this gets tricky. Ideally, we want it on the next frame; however, we also need it without delaying the main thread, which is hard as reading back means synchronizing CPU and GPU.

To start, I simply looked at reading back the data from the portion of the texture we cared about. But Unity’s GetPixels doesn’t work with the float format I used for the texture. So after some faffing around, I added a tiny compute shader to copy the data out to a ComputeBuffer. It was immediately evident that using GetData on the ComputeBuffer caused an unacceptable delay, between 0.5 and 0.6ms in my initial tests. That is a long time to be blocking the main thread, so instead, I tested RequestAsyncReadback. It does avoid blocking the main thread, but it does so by delivering the result a few frames later. This could work, but it’s a shame to have that latency.

After a bit more googling, I learned about the D3D11_USAGE_STAGING flag and how we could use it to allow us to pull data on the following frame without blocking the main-thread. Soon after I stumbled on this comment.

MJP is an excellent engineer whose blog posts have helped me to no end, so I was excited to see there might be an avenue here. There was only one sticking point: Unity doesn’t expose D3D11_USAGE_STAGING in its compute buffers. This meant I needed to break out C++ and learn to write native plugins for Unity.

Thanks to the examples, I was able to get the basics written, but something I was doing was crashing Unity A LOT. For the next < insert embarrassing number of hours here > I struggled with this, until I found that mapping the buffer would freeze or crash Unity unless done via a plugin event. In my defense, this wasn’t done in the example when writing to textures or vertex buffers, but I’m a noob at D3D, so no doubt I’m missing something.

Regardless, after all the poking about, we finally get this:


The numbers on the left-hand side are the IDs of the assets being hovered over.

I don’t have the readback timing for the final version; however, when the prototype wasn’t crashing, the readback time was 0.008ms. Which is plenty good enough for us :)

I’ve still got some experiments and cleanup to do, but then we can start hooking this up to any system that can benefit from it[4]

I hope this finds you well.

Peace.

[0] In fact, using multiple collider primitives (box, sphere, capsule, etc) together is much faster than using a mesh, but the assets we are talking about today are the ones that can’t be easily approximated that way.

[1] In TaleSpire, the world is chunked up into 16x16x16 unit regions called zones

[2] IIRC it also affects clearing the texture, which is handy.

[3] I used the GL docs as I think they are clearer, but this is also true for DirectX.

[4] Especially for creatures. We only used the high-poly collider meshes there as accurate picking was so important.

TaleSpire Dev Log 253

Evening all.

It’s been a reasonably productive day for me today:

- I fixed two bugs in the light layout code

- Fixed pasting of slabs from the beta [0]

- Fixed a bug in the shader setup code

- Enabled use of the low poly meshes in line-of-sight (LoS) and fog-of-war (FoW)

- Started writing the server-side portion of the text chat

I have not made any UI for chat in TaleSpire yet. My focus has been on being able to send messages to different groups of players. Currently supported are:

- all players in the campaign

- all players in the board

- specific player/s

- all gms

Next in chat, I need to look at attachments. These will let you send some information along with the text. Currently, planned attachment kinds are:

- a specific position in the board

- a particular creature in the board

- a dice roll

We will be expanding these, but they feel like a nice place to start and should help with the flow of play in play sessions.

We’ll show you more of the UI as it happens.

One cute thing I added for debugging is the ability to render using the low-poly occluder meshes instead of the high-poly ones. Here is an example of that in action. You can see that, in this example, the decimator has removed too much detail from the tiles. This is handy for debugging issues in FoW and LoS.

Alright, that’s all for today,

Seeya

[0] The new format is a little different, and I’ll publish it a bit closer to shipping this branch

TaleSpire Dev Log 252

Heya folks! Yesterday I got back from working over at Ree’s place, which was, as always, super productive.

Between us, we have:

- props merged in

- copy/paste working correctly with combinations of props/tiles together

- Fix parts of the UI to configure keybindings

- More work on the internationalization integration

- Progress on the tool hint UI and video tutorial system

- Updated all shaders to play nice with the new batching system

- Fixed some bugs in the math behind animations and layout of static colliders

- Rewritten the cut-volume rendering

- Fixed a bug that was stopping us from spinning up staging servers

- Generated low-poly meshes for use in shadows, line-of-sight, and fog-of-war

That last one is especially fun. Ree did a lot of excellent work in TaleWeaver hooking up Unity’s LOD mesh generator (IIRC, it was AutoLod). The goal is to allow creators to generate or provide the low poly mesh used for occlusion. This allows TaleSpire to reduce the number of polygons processed when updating line-of-sight, fog-of-war, or when rendering shadows.

Most of the above still need work, but they are in a good place. For example, the cut shader has a visual glitch where the cut region’s position lags behind the tile position during camera movement. However, that shouldn’t be too hard to wrangle into working.

Today I moved the rest of my dev setup to the new house. Lady luck decided it was going too well and put a crack in my windscreen, so I’m gonna have to get that replaced asap.

The next bug for me to look at is probably one resulting in lights being positioned incorrectly.

Anyhoo that’s the lot for tonight.

Seeya!

TaleSpire Dev Log 251

Heya peoples! Today was my first day back working after the Christmas break, and my goal was to add prop support for copy/paste.

Previously I was lazy when implementing copy/paste and stored the bounding box for every tile. This data was readily available in the board’s data, so copying it out was trivial, as was writing it back on paste. However, this is no good when artists need to change an existing asset (for example, to fix a mistake). Fixing this means looking up the dimensions and rotating them on paste, but that is perfectly reasonable.

Looking at this issue reminded me that we have the exact same problem with boards. As we are looking at this, we should probably fix this for board serialization too. This does make saving a board much more CPU intensive, however. The beauty of the old approach was that we could just blit large chunks of data into the data to save; now, we have to transform some data on save. We will almost certainly need to jobify the serialize code soon[1].

The good news is that, in both the board and slab formats, we will be removing 12 bytes of data per tile[0]. In fact, as we have to transform data when serializing the board, why not make the positions zone-local too? That means we can change the position from a float3 to a short3 and save an additional 6 bytes per tile[2].
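Here is the zone-local idea as a Python sketch. The zone size (16) and position resolution (100 steps per unit) are the numbers used in footnote [2]; the function names and little-endian layout are assumptions for the example.

```python
import math
import struct

ZONE_SIZE = 16
POSITION_RESOLUTION = 100  # steps per unit, as used in footnote [2]


def pack_zone_local(position, zone_origin) -> bytes:
    """Store a position relative to its zone as three 16-bit integers."""
    local = [
        round((p - o) * POSITION_RESOLUTION)
        for p, o in zip(position, zone_origin)
    ]
    return struct.pack("<3h", *local)


def unpack_zone_local(data: bytes, zone_origin) -> tuple:
    """Recover the world-space position from the packed form."""
    steps = struct.unpack("<3h", data)
    return tuple(o + s / POSITION_RESOLUTION for s, o in zip(steps, zone_origin))


packed = pack_zone_local((33.25, 5.5, 17.0), (32, 0, 16))
print(len(packed))                               # 6 bytes, versus 12 for three floats
print(unpack_zone_local(packed, (32, 0, 16)))    # (33.25, 5.5, 17.0)
print(math.ceil(math.log2(ZONE_SIZE * POSITION_RESOLUTION)))  # 11 bits per component
```

A zone-local component never exceeds 1600 steps, so it fits comfortably in a signed 16-bit value.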

A chunk of today was spent umm’ing and ah’ing over the above details and different options. I then got stuck into updating the board serialize code. Tomorrow will be a late start as I have an engineer installing internet at my new apartment at the beginning of the day. After that, I hope to get cracking on the rest of this.

Back soon with more updates.

Ciao

[0] sizeof(float3) => 12

[1] Or perhaps burst compile it and run it from the main thread.

[2] technically we only really need ceil(log((zoneSize * positionResolution), 2)) => ceil(log(16 * 100, 2)) => 11 bits for each position component, which would mean 33 bits instead of 48 for the position. However short3 is easier to work with so will be fine for now.

TaleSpire Dev Log 250

Heya folks, I’ve got a beer in hand, and the kitchen is full of the smell of pinnekjøtt, so now feels like an excellent time to write up work from the days before Christmas.

This last week my focus has been on moving (still) and props.

As mentioned before, I’ve been slowly moving things to my new place, but things like the sofa and bed wouldn’t fit in our little car, so the 18th was the day to move those. I rented a van, and we hoofed all the big stuff to the new place. The old flat is still where I’m coding from as we don’t have our own internet connection at the new place yet, so I’m traveling back and forth a bunch.

Anyhoo you are here for news on the game, and the props stuff has been going well. Ree merged all his experiments onto the main dev branch, and I’ve been hooking it into the board format. The experiments showed that the only big change to the per-placeable[0] data is how we handle rotation. For tiles, we only needed four points of rotation, but props need 24. We tested free rotation, but it didn’t feel as good as rotating in 15-degree steps.

The good news is that we had been using a byte for the rotation even before, so we had plenty of room for the new approach. We use 5 bits for rotation and have the other 3 bits available for flags.
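As a quick illustration, here is how 24 rotation steps and 3 flag bits can share one byte, in Python. Putting the rotation in the low 5 bits is an assumption; the post doesn't say which end of the byte it lives in.

```python
ROTATION_STEP_DEGREES = 15
ROTATION_STEPS = 360 // ROTATION_STEP_DEGREES  # 24 steps, fits in 5 bits
ROTATION_BITS = 5
ROTATION_MASK = (1 << ROTATION_BITS) - 1       # 0b00011111


def pack_rotation(step: int, flags: int) -> int:
    """Combine a rotation step (0..23) and 3 flag bits into one byte."""
    if not 0 <= step < ROTATION_STEPS:
        raise ValueError("rotation step out of range")
    if not 0 <= flags < 8:
        raise ValueError("only 3 flag bits are available")
    return (flags << ROTATION_BITS) | step


def unpack_rotation(byte: int) -> tuple:
    """Return (rotation step, flags) from a packed byte."""
    return byte & ROTATION_MASK, byte >> ROTATION_BITS


packed = pack_rotation(23, 0b101)
print(packed)                   # 183
print(unpack_rotation(packed))  # (23, 5)
```

Multiplying the step by `ROTATION_STEP_DEGREES` gets you back to an angle.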

I also wanted to store whether the placeable was a tile or prop in the board data as we need this when batching. Looking up the asset each time seemed wasteful. We don’t need this per tile, so we added it to the layouts. We again use part of a byte field and leave the remaining bits for flags. [1]

There are a few cases we need to take care of:

- Tiles and Props having different origins

- Pasting slabs that contain placeables which the user does not have

- Changes to the size of the tiles or props

Let’s take these in order.

1 - Tiles and Props having different origins

All tiles use the bottom left corner as their origin. Props use their center of rotation. The board representation stores the AABB for the placeable, and so, when batching, we need to transform Tiles and Props differently.

2 - Pasting slabs that contain placeables which the user does not have

This is more likely to happen in the future when modding is prevalent, but we want to be able to handle this case somewhat gracefully. As the AABB depends on the kind of placeable, we need to fixup the AABBs once we have the correct asset pack. We do this in a job on the load of the board.

3 - Changes to the size of the tiles or props

This is a similar problem to #2. We need to handle changes to the tiles/props and do something reasonable when loading the boards. This one is something I’m still musing over.

Progress

With a couple of days of work, I started being able to place props and batch them correctly.

I spotted a bug in the colliders of some static placeables and tracked the mistake down to TaleWeaver.

With static props looking like they were going in a great direction, I moved over to set up all doors, chests, hatches, etc., with the new Spaghet scripts. This took a while as the TaleWeaver script editor is in a shockingly buggy state right now. However, I was able to get them fixed up and back into TaleSpire. I saw that some of the items have some layout issues, so I guess I have more cases miscalculating the orientation. I’ll look at that after Christmas.

In all, this is going very well. It was a real joy to see how quickly all of Ree’s prop work could be hooked up to the existing system.

After the break, I will start by getting copy/paste to work with props, and then hopefully, we’ll just need to clear up some bugs and UI before it’s ready to be tested.

Hope you all have a lovely break.

God Jul, Merry Christmas, and peace to the lot of ya!

[0] A ‘placeable’ is a tile or a prop

[1] I’m going to review this later as it may be that we need to look up the placeable’s data during batching anyway and so storing this doesn’t speed up anything.

TaleSpire Dev Log 249

Good evening folks,

I’m happy to report that the new lighting system for tiles and props is working! We now have finally moved away from GameObjects for the board representation.

As it looks identical to the current lights, I’m not posting a clip, but I’m still happy that that bit is done[0]. In time, we will want to revisit this code as there is still more room for performance improvements. However, there are bigger fish to fry for now.

After finishing that, I squashed a simple bug in the physics API where I was normalizing a zero-length vector (woops :P).

Next, I’ll probably be looking at the data representation for props. I will be a bit distracted though, as I’m moving house and in the next few days, I need to get a lot done.

I think that’s all I have to report for today.

Seeya around :)

[0] Technically I still haven’t worked out Unity’s approach to tell if a camera is inside the volume of a spot light. This means my implementation is a little incorrect, but it’s not going to be an issue for a while.

TaleSpire Dev Log 248

Today I’ve been working on the new light system.

The basics of this are that I am porting our previous experiments allowing lights without gameobjects to our new branch and hooking it into the batching code. There are, of course, lots of details to work out when trying to make something shippable.

As usual, we need to think a little about performance. There are often many lights on screen, and each light in Unity takes one draw call (when not casting shadows, which these don’t). Each frame, we need to write each light into a CommandBuffer[0]. With Unity’s approach to deferred lights, a light may be rendered with one of two shaders based on whether the camera is inside the light’s volume or not. Two matrices need to be provided, and the light color seems to need to be gamma corrected[1]. As CommandBuffers can only be updated on the main thread, I want to do as little of the calculation there as possible. Instead, we will calculate these values in jobs we have handling batching, and then the only work on the main thread is to read these arrays and make the calls to the CommandBuffer. This fits well as the batching jobs already calculate the lights’ positions and have access to all the data needed to do the rest.

If all goes well, I hope to see some lights working tomorrow.

Seeya then.

[0] we need to use a command buffer as the light mesh has to be rendered at a specific point in the pipeline, specifically CameraEvent.AfterLighting

[1] This one was kind of interesting. When setting the intensity parameter of the light, the color passed to the shader by Unity changed. In my test, the original color was white, so the uploaded values were float4(1, 1, 1, 1), where the w component was the intensity. However, when I changed the intensity to 1.234, the value was float4(1.588157, 1.588157, 1.588157, 1.234). It’s not uncommon for color values to be premultiplied before upload (see premultiplied alpha), so I just made a guess that it might be gamma correction. To do this, we raise to the power of 2.2. One (white) raised to the power of anything will be 1. So instead, we try multiplying by the intensity and raising that to the power of 2.2. Doing that gives us 1.58815670083..etc bingo!
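The guess in footnote [1] is easy to check numerically. This Python sketch just reproduces the arithmetic; the function name is made up, and the formula is the guess described above rather than anything from Unity's documentation.

```python
def uploaded_light_color(color_rgb, intensity, gamma=2.2):
    """Multiply each channel by the intensity, then raise it to the power gamma.

    The w component carries the raw intensity, matching the observed values.
    """
    rgb = tuple((c * intensity) ** gamma for c in color_rgb)
    return rgb + (intensity,)


print(uploaded_light_color((1.0, 1.0, 1.0), 1.0))    # (1.0, 1.0, 1.0, 1.0)
print(uploaded_light_color((1.0, 1.0, 1.0), 1.234))  # rgb roughly 1.588157
```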