From the Burrow

Late again

I have traveled to see family this week so sorry for the late writeup.

This week went pretty well. I went through the PBR example I had been using line by line making notes and then tried to match my implementation to theirs.

I had made some progress but the colors still looked washed out, so something must have been putting the color values way out of whack. Finally I saw it: I had been adding a color I should have been multiplying. A facepalm and a tweak later, the color started looking like this

I’m still not convinced that the example’s approach for creating the final color is correct, however this is plenty good enough for now.

With that done I revisited my UI code (which uses nuklear) and found that it was broken…shits.

A bunch of git archaeology told me the issue was related to my GL context caching. Double shits

..and then that the crash occurred because I had made CEPL’s handling of the context more robust. At this point a drink was in order, but after that, fixing!

It turned out that I hadn’t been unbinding VAOs correctly when rendering, so subsequent buffer unbindings were being captured in the VAO. This sucks but was a 1 line fix and CEPL is much better for it.

There’s other stuff going on but it’s not worth yakking about right now.

Seeya

Grind

As I was so late writing last week I don’t have a lot that’s new, but I need to keep the rhythm of writing these.

I have been focused on getting my maths library up to scratch. I did a bunch of work on getting the non-consing api cleaned up and merged. This involved fixing a whole host of optimization notes from the compiler. The compiler I use is pretty good at explaining where it is forced to ‘waste’ speed and why. The biggest single change was changing the return type of the vector length & distance functions to show that they could only return non-negative floats. That was important as the square root of a negative number is a complex number, so by indicating the values could never be less than 0 the compiler could ignore the complex number cases.
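As a rough illustration of that kind of change (a sketch with a hypothetical function name, not the actual rtg-math source), bounding the float types from below tells the compiler the square root is always real:

```lisp
;; Hypothetical example: VEC3-LENGTH is an illustrative name, not rtg-math's API.
;; The type (single-float 0f0) means "a single-float that is >= 0".
(declaim (ftype (function (single-float single-float single-float)
                          (single-float 0f0))
                vec3-length))

(defun vec3-length (x y z)
  (declare (optimize (speed 3)))
  (let ((len-sq (+ (* x x) (* y y) (* z z))))
    ;; A sum of squares is never negative; saying so lets the
    ;; compiler drop the complex-number case of SQRT.
    (declare (type (single-float 0f0) len-sq))
    (sqrt len-sq)))
```

With declarations like these a compiler that emits optimization notes has one less reason to complain about generic arithmetic around the `sqrt` call.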

I also found a branch where I had started adding support for lines, rays, line-segments & axis aligned bounding boxes in ℝ3. I fixed up the line-segments & AABBs and got that merged too.

Finally I started the non-consing (destructive) version of the matrix apis. This is also on master.

Tonight I want to test the non-consing api against the consing version to make sure I haven’t introduced any bugs. I’ll then redefine the consing version using the non-consing functions to do the work. At that point I’m probably ready to stop scratching this itch and focus on graphics stuff again.
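A minimal sketch of that kind of check, assuming two stand-in functions (`v3-add` and `v3-add!` are hypothetical names, not the rtg-math API): run both versions on random inputs and compare the results.

```lisp
;; Hypothetical stand-ins for a consing and a non-consing vector add.
;; These are NOT the rtg-math functions, just the same shape of API.
(defun v3-add (a b)
  "Consing version: allocates and returns a fresh vector."
  (make-array 3 :element-type 'single-float
                :initial-contents (list (+ (aref a 0) (aref b 0))
                                        (+ (aref a 1) (aref b 1))
                                        (+ (aref a 2) (aref b 2)))))

(defun v3-add! (a b)
  "Non-consing version: overwrites A with the sum and returns A."
  (dotimes (i 3 a)
    (incf (aref a i) (aref b i))))

(defun random-v3 ()
  "A vector of three random single-floats in [-1, 1)."
  (make-array 3 :element-type 'single-float
                :initial-contents (list (- (random 2f0) 1f0)
                                        (- (random 2f0) 1f0)
                                        (- (random 2f0) 1f0))))

(defun versions-agree-p (&optional (trials 1000))
  "True if both versions give the same answer on every random trial."
  (loop :repeat trials
        :always (let* ((a (random-v3))
                       (b (random-v3))
                       (expected (v3-add a b))
                       ;; copy A so the destructive call cannot disturb it
                       (actual (v3-add! (copy-seq a) b)))
                  (equalp expected actual))))
```

The `(v3-add! (copy-seq a) b)` form is also roughly what redefining the consing version in terms of the non-consing one would look like.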

Peace

Boring Necessities

This week (and a bit) has felt like a bit of a blur as I’ve mainly been working on boring stuff that just needed doing. I’ll be honest, I can’t remember what I did so I’ll just go spelunking through my commits and see what I find.

lisp-games-cross-test

I started yet another project :p. This came out of me feeling the uncertainty you get when you have written a good chunk of the stack you use (and don’t have enough tests). It’s stupid to have written this thing which is meant to be nice for creating things and then be stymied by a lingering sense that something, somewhere must be breaking.

One thing I really wanted to be sure about was that my maths library rtg-math was solid.

As I didn’t want to rely on my own (very limited) knowledge I decided to make a basic fuzz testing setup where I compare my library against other math libraries written in Common Lisp.

I built on top of the excellent fiveam testing library and made a fairly nice system for defining comparative tests across several libraries. It takes into account that a library’s features will not exactly overlap with another. I’m happy with it so far but it’s not ready for github yet.

Anyhoo I set it to work and found that a lot of stuff is working just fine. One function (the matrix to quaternion function) was broken. At first I stupidly blamed the c++ I based the function on until, after many re-reads, I noticed that the [] operator had been overloaded to index from x and ignore the w component altogether. This meant I had a bunch of ‘off by one’ indexing errors in that function :facepalm:.

However I did find a typo in the book’s code which has now been fixed! So that’s a nice side effect of this work.

I then got massively mistaken about the api of another library I was comparing to (sorry axion). The process of being gently schooled by psilord on the #lispgames irc was incredibly helpful but did send me into frequent ‘What if my entire api is wrong’ panics.

Luckily he provided me with some excellent links and I’m slowly getting a better grasp of the terminology.

As my library is heavily based on the c++ code from the text book I learned from, my library is fine and the only problems were (and to some extent remain) with my brain :D

RTG-Math

Whilst working on the above I also got a friendly jab that my vector math was too slow. It turns out the ‘jab-er’ in question was comparing his destructive (mutable) api with my non-destructive one. This is kind of understandable as I didn’t have a destructive api to compare against.. but of course I fixed that >:)

Below is the (very basic) test I did to check how it performs:

    ;; First we make a list of 1000 random vector3s
    ;;                   ↓↓
    RTG-MATH> (let ((to-add (loop :for i :below 1000 :collect
                               (v! (- (random 2f0) 1f0)
                                   (- (random 2f0) 1f0)
                                   (- (random 2f0) 1f0))))
                    (res (v! 0 0 0)))
                ;;
                ;; Here we make the list circular
                ;;   ↓↓
                (setf (cdr (last to-add)) to-add)
                ;;
                ;; Then we iterate 100 million times adding the elements of
                ;; the circular list into the variable 'res'
                ;;   ↓↓
                (time
                 (loop :for i :below 100000000 :for e :in to-add :do
                    (v3-n:+ res e)))
                res)
    Evaluation took:
      0.748 seconds of real time
      0.748000 seconds of total run time (0.748000 user, 0.000000 system)
      100.00% CPU
      2,297,194,923 processor cycles
      0 bytes consed

    #(-1248991.9 2382024.5 358581.84)

Not too shabby! ‘bytes consed’ in lisp means ‘memory allocated’, so we can see this is only mutating the vectors already created.

The test is pretty rough and ready, however the list is long enough that it wouldn’t just fit in a cache line, and the random calculations are outside the loop as they were the slowest part of my original test.

In the process of making these destructive versions of the API I have been going through the majority of the codebase adding type declarations to everything I could. I still have some optimization work and cleanup to do but it’s mostly giving the compiler the information it needs to do its job.

Optional typing is fucking great

Nineveh

Nineveh is intended to be a ‘standard library’ of gpu functions and helpers for CEPL. It has code that doesn’t belong in a GL abstraction but is really useful to have. Currently it’s not in quicklisp (the lisp package manager) as it’s still too small & too in flux however progress is being made.

The main addition this week was a draw-tex function which will take any texture or sampler and draw it to the screen. Simple stuff but it has a bunch of options for drawing in certain portions of the screen (top-left, bottom-right etc) scaling, rotation, drawing cubemaps in a nice ‘cross’ layout and maintaining aspect ratios while doing this.

Also Ferris helped me find some (really nasty) bugs in one of the functions in the library which was ace.

PBR

I spent yesterday evening at Ferris’ place where I got a bunch of help with some artifacts I was seeing in my code. It helps so much to have someone who knows what certain things should look like. When it comes to graphics coding I really lack that.

In the process of this I found the previously mentioned bug in nineveh, a bug in my compiler (let wasn’t throwing an error for duplicate var names) and added a way to support uint literals.

This has made me much more confident in the code, and with where the rest of the artifacts I’m seeing are probably coming from. HOPEFULLY I will have some new screenshots soon.

Sleep

I haven’t been getting enough, so I’m going to bed.

Seeya!

Captain Merge'in

It’s done, finally merged. Hundreds of commits, 15 issues closed, a monster is dead.

The biggest thing I’m noticing right now is how slow the evening goes when you aren’t crunching :D

I was looking at last week’s post to see what I was up to and I realize that although I’ve been feeling like I was going really slow I’ve had 52 commits in the last week which includes the following:

Added a simple api for users to be able to inspect the compilation environment in their macros

Common Lisp allows you to access the environment in the macros, however the interface to interact with that object didn’t make it into the standard. It was, however, documented so I can still read it [0]. I decided not to implement that api yet though and instead I just made the functions I felt made sense. It will be easy to revisit this when I feel the drive to.

Added ‘declare’

Lisp allows you to declare information about things in your code. Whether it is that a function should be inlined or the type of a value, it’s handled through declare.

Once again, although it didn’t make it into the standard, extending the kinds of things that can be declared has been thought about and documented. For now I just hooked it into my compile-time-metadata system. A little reading reveals that the proposed system is a rough superset of what I offer so at some point I will implement that and redefine my metadata using it.

Added ‘deftype’

This is one place I left the lisp spec entirely. deftype in lisp is mad, types are seen as sets of objects (makes sense) and they don’t restrict you in how you define that. For example I can say:

    ;; a function that returns true if given an array where
    ;; all its dimensions are of equal length
    ;;
    (defun equidimensional (a)
      (or (< (array-rank a) 2)
          (apply #'= (array-dimensions a))))

    ;; the type of all square arrays
    ;;
    (deftype square-matrix (&optional type size)
      `(and (array ,type (,size ,size))
            (satisfies equidimensional)))

This is super expressive but totally impossible to check at compile time as you can have arbitrary function calls in there. The compilers do a great job on using the flexibility in some cases though, which I won’t yak about now.

In GLSL things are very different, we don’t want to do anything at runtime unless we can help it. We can’t make any types except structs and I already have a nice mechanism for that.

However there are some things that may be interesting.

- Making a ‘special kind of’ some existing type. For example a rotation type that is essentially a vec3. In the GLSL it will be a vec3, however by making the type checker see it as a different type we could make it impossible to pass a position as the rotation argument to a rotate function by accident. I call these shadow types (I probably need to rethink this name :p).

- Making a type that only exists at compile-time for the purpose of holding metadata. This ‘ephemeral’ type never appears in the resulting GLSL and is an advanced feature but can be useful. I use these to represent vector spaces in my code and then use macros to inject code to create the matrices that transform between the spaces.

To make a shadow type I write (deftype rotation () :vec3)

To make an ephemeral type I write (deftype space () ())

In the case of shadow types I also allow you to easily ‘copy’ functions that are defined for the type you are shadowing. This means you don’t have to redefine addition, subtraction etc for your rotation type, you just copy them from vec3.

Mooore Types

One problem with how I used to write lisp is that too often I would use lists when I should have used classes. This has bitten me in the arse one too many times and so I refactored a bunch of stuff to have their own types.

This will help with future refactoring and also as I start working out the ‘final’ public api for my compiler.

Rewrite Space Feature in CEPL

Spaces are a feature I have spoken of before but I will quickly recap. They are a fairly simple idea, you define a scope with a vector space and inside that scope all vectors have to be in that space. If you try and use a vector from another space it will be automatically converted to the correct space before anything else happens. This lowers the chances of messing up your vector math and makes the code more self-documenting.

Previously this was implemented by:

- compiling the code once

- analysing the AST for places that needed conversions

- patching the code with the needed conversions

- recompiling and using the new GLSL

This felt super hacky as all of the process was specific to my compiler. I have now rewritten it using macros, there is much less that will feel alien to a lisper[1] and it all happens in one pass now.

Change the default minify-filter for samplers in CEPL

I thought I had a bug in the textures or samplers in CEPL. I was using the textureLod function in my shaders but no matter what mipmap level I chose nothing would change.

I spent hours looking at the code around mipmaps and finally found out that nothing was wrong, but I had misunderstood how things were meant to work. I had imagined that textureLod allowed you to query the colors from a texture’s mipmaps regardless of the filter settings, whereas actually you need to set the minify-filter to one of the mipmap filters, such as linear-mipmap-nearest or linear-mipmap-linear, before ANY querying using textureLod is possible.

CEPL defaulted to just linear as it felt the simplest and I didn’t want to confuse beginners (irony goes here) but instead I created a behaviour that was surprising and required knowledge to understand. This interests me and I’ll muse over this in future when designing apis.

Bugfixes EVERYWHERE

Just a whole bunch of other stuff. Not much to note here other than how quickly trying to make nice errors seems to balloon code.

For example if you are processing 10 things, and one fails, it is easy to detect this in the process_thing function and throw an error. However this means that the user may fix the one thing, retry, and hit the same error on the next thing. Really we want to aggregate the errors and present an exception saying ‘these 15 things failed for these reasons’. This would give the user more chance of seeing the patterns that are causing problems. This is especially true with syntax errors in your code. You don’t want them one at a time, you want them in aggregate in order to see what you are doing wrong.

This also means that your error messages should be able to adjust depending on the number of things that went wrong. For example when 15 things break the ‘these 15 things failed for these reasons’ message is cool, but if only 1 thing broke we want ‘this thing failed for this reason’. These subtle language differences can make a huge difference to how the user feels about the tool they are using. Pluralizing words when there is only one thing sounds dumb and just adds to the annoyance of the tool not working.

I don’t have ideas for a clean solution to this yet, but one day I want something for this.
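For what it’s worth, here is one rough way the idea could be sketched in plain Common Lisp. Everything here (`failure-summary`, `process-all`, `things-failed`) is a hypothetical name made up for illustration, and it is certainly not the clean solution I’m after:

```lisp
;; Hypothetical sketch: collect every failure first, then report them
;; together with wording that adapts to how many things went wrong.
(defun failure-summary (failures)
  "FAILURES is a list of (thing . reason) pairs. Returns a message string."
  (with-output-to-string (s)
    (if (= (length failures) 1)
        (format s "This thing failed for this reason:")
        (format s "These ~d things failed for these reasons:"
                (length failures)))
    (loop :for (thing . reason) :in failures
          :do (format s "~%  ~a: ~a" thing reason))))

(define-condition things-failed (error)
  ((failures :initarg :failures :reader failures))
  (:report (lambda (c stream)
             (write-string (failure-summary (failures c)) stream))))

(defun process-all (things process-thing)
  "Call PROCESS-THING on every element, remembering errors instead of
stopping at the first one. Signals a single error at the end if any failed."
  (let ((failures '()))
    (dolist (thing things)
      (handler-case (funcall process-thing thing)
        (error (e) (push (cons thing (princ-to-string e)) failures))))
    (when failures
      (error 'things-failed :failures (reverse failures)))
    t))
```

The singular/plural switch is hard-coded into one function here, which is exactly the part that balloons once there are many kinds of error.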

Sleep

I’m damn tired now. I’m off to bed.

Peace

[0] https://www.cs.cmu.edu/Groups/AI/html/cltl/clm/node102.html

[1] and once I have the stuff from [0] it will be even better

Inside the machine

I’ve been back in the compiler this week and it’s just been constant refactoring :p

I kicked off the week with tweaks & bugfixes to the first-class function code. I then started on adding some compile-time metadata to Varjo.

The idea is super basic: We want to associate some data with the values flowing through the shader.

We already have flow-ids, which represent the values in the shader, so we could just add a hashtable that maps these ids to the data. Simple, and it seems to work!

At the very least it will work enough to make some progress on the idea anyhoo. You are able to add, but never remove metadata. Metadata can also be combined, for example:

    (let ((x (v! 1 0 0 0))  ;; ← lets assume that both these have metadata
          (y (v! 0 1 0 0))) ;; ← with a key called 'foo'
      (if something
          x
          y))

What should be the metadata for the result of the if? Clearly this depends on what the metadata is for. So in the case of conditionals like if or switch we call a method called combine-metadata defined for that metadata type, and it is its job to decide the metadata for the new result.

This mechanism is very simple but should be enough to get some useful results.
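A minimal sketch of the shape of this, in plain Common Lisp. The class, the table and the ‘keep it only if both branches agree’ policy are all assumptions made up for illustration; this is not Varjo’s actual code or signature:

```lisp
;; Hypothetical sketch of add-only metadata keyed by flow-id.
(defvar *metadata-table* (make-hash-table)
  "Maps a flow-id to the metadata attached to that value.")

(defun add-metadata (flow-id meta)
  "Attach META to FLOW-ID. There is deliberately no remove function."
  (setf (gethash flow-id *metadata-table*) meta))

(defun metadata-for (flow-id)
  (values (gethash flow-id *metadata-table*)))

;; One example kind of metadata: which vector space a value lives in.
(defclass space-meta ()
  ((name :initarg :name :reader meta-name)))

(defgeneric combine-metadata (a b)
  (:documentation
   "Decide the metadata for a value that could have come from A or B,
for example the result of an IF."))

(defmethod combine-metadata ((a space-meta) (b space-meta))
  ;; Assumed policy: keep the metadata when both branches agree,
  ;; otherwise the result carries none (NIL).
  (when (eq (meta-name a) (meta-name b))
    a))
```

For the `if` above, the result’s metadata would then be something like `(combine-metadata (metadata-for x-id) (metadata-for y-id))`, where `x-id` and `y-id` stand for the flow-ids of the two branches.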

So with this in place I could dive back into re-implementing my space analysis feature! Progress.. excitement… Ah fuck it, I really need symbol-macros.

Symbol macros are pretty simple:

    (let ((x some-object))
      (symbol-macrolet ((foo (slot-value x 'foo))) ;; You specify a symbol 'foo' and what it will expand into
        (print foo)    ;; the compiler then replaces every instance of 'foo' in your code with the expansion
        (setf foo 20)  ;; before it compiles the code
        (print foo)))

So what actually ends up getting compiled is:

    (let ((x some-object))
      (print (slot-value x 'foo))
      (setf (slot-value x 'foo) 20)
      (print (slot-value x 'foo)))

So that should be simple to implement.

SHOULD BE

Alas when I made the macroexpander[0] for Varjo I was super lazy. I didn’t implement any lexically-scoped macros and just expanded everything in a prepass. This really hurt as I suddenly needed to go change how all this was written and mix the expansion into the compile pass.

This refactor took all of Sunday and it was one of those ones where you are changing so much fundamental stuff that you can’t compile for an hour or so at a time. As someone who compiles many times a minute this just left me feeling very unsettled. However when it was done the little test suite I have made me feel fairly confident with the result.

Another nice thing about all this is that my macros will now be able to take the compilation-environment as an argument just like in real common lisp. This means I can provide some of the environment introspection features that are described in CLTL2 but sadly didn’t make it into the standard. This together with metadata make the macros extremely powerful.

Next week

The first order of business is compiler-macros, which should be easy enough. Then I want to add support for &rest (vararg) arguments to the compiler. Then I will get back into the spaces feature and see how far I can get now.

Peace

[0] back in 2013, holy shit!

Back in the create phase

The other week I was nattering about how I feel I oscillate slowly between needing to create and needing to consume. These last 2 weeks I have definitely been back in the ‘create’ mode. I’m hammering away at the compiler and the new stuff is starting to feel nice. I won’t bother with deep explanations as it wouldn’t work in this format, however I have been doing the following:

- Refactored how the compiler compiles functions. I certainly can’t say this code is clean yet, but it is at least not lazy. I used to just splice the function code in as a local function and let the code de-duplicator take care of things. This worked well enough for a while but I’ve been fighting it recently so I’m happy to sort this out.

- Tests! These have been worth their weight in gold this week and I’ve been tidying them up and adding a few more as I debug things.

- Moved the flow-id to the type: In short I have an ID that is used to work out where values (especially arguments) travel inside functions. When talking to a chap at work about this he mentioned ‘dependent types’ and this stuck with me. Given that this is metadata about the things flowing around, it is the kind of info that could live on a type. I have thus moved this to a field inside the type object and in doing so cleaned up a TONNE of things. This felt great.

- Started cleaning some of the code used to represent functions from the glsl spec and realized a bunch of it was redundant. Deleting code is heavenly.

- Deleted more old code related to types (from when I tried to encode the glsl spec’s stupid gentypes directly)

- Fixed assorted bugs

- Found more bugs

- Started work on supporting the void type. Funnily enough I have not supported void so far in my compiler (except for the main function). The reason was that all code in lisp is an expression, so everything has a return value (even if it’s nil), and to that end I worked to make sure this felt right in Varjo. However this has always left a few places that felt janky so I’m making void work now. This is pretty easy.

I’m basically going to be hammering on more of the same this week. I want to get this working and into master asap. One downside of the above is that in CEPL I had to introduce a hack to keep my spaces feature working. However I realized the other day how to replace all the crazy AST transforms I do in CEPL with a much simpler mechanism that is more general and will live in Varjo. More on this soon.

That’s the lot.

Seeya

Balls!

It’s been a good week for progress.

GL State Cache

The first big thing I achieved was to add GL state caching to CEPL. This has fixed some bugs, avoided some unnecessary state changes and generally made prototyping a bit easier.

For those not familiar with OpenGL I’ll try to explain the whats and whys now. When using OpenGL you are basically working with a crazy vending machine for graphics: you shove what you want to draw in one hole, and then flick a bunch of switches and hit go. The switches control ‘how’ it is going to draw. Once you have ‘hit go’ it will start rumbling and very soon will have a result. Whilst it is working you can prepare (and start) the next job, setting things up and flicking switches. GL keeps a queue of what it has to do.

The ‘vending machine’ in the above analogy is more formally called the ‘GL state machine’. The state machine has some ‘interesting’ aspects such as:

- Setting some properties on the state machine causes it to have to finish what it was doing before they can be applied, which blocks all queued jobs until that is done. This is really bad for performance.

- Querying the properties of the machine is slow. This means that although changing things can be fast, looking at what you are changing isn’t :p

So we want to avoid changing things on the state machine when we can help it, but we can’t afford to look at the machine to see if we can avoid changing things.. the solution here is a cache. In the cache we record what we think/know the state of GL is, then when we want to change things we can look at the cache to see if we already have the correct value, and if so, we can avoid requesting the change.
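The core of the idea can be sketched in a few lines. This is not CEPL’s cache: the struct, the function names and the stubbed ‘GL call’ (which only counts how often it is invoked) are all hypothetical, chosen so the example runs without a GL context:

```lisp
;; Hypothetical sketch of a state cache; no real GL calls are made.
(defvar *gl-calls* 0
  "Counts how often the (stubbed) driver is actually asked to change state.")

(defun fake-gl-bind-texture (id)
  "Stand-in for a real GL call. It just counts invocations."
  (declare (ignore id))
  (incf *gl-calls*))

(defstruct state-cache
  (bound-texture nil))

(defun bind-texture-cached (cache id)
  "Only touch the state machine when the cache says the value differs."
  (unless (eql id (state-cache-bound-texture cache))
    (fake-gl-bind-texture id)
    (setf (state-cache-bound-texture cache) id))
  id)
```

Binding texture 7 twice in a row through `bind-texture-cached` only reaches the stub once; the second request is answered from the cache without ever querying the machine.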

It took a surprisingly long time to get a solution I was happy with in CEPL as I was trying to find a balance between speed and usability. A cache like this can be super useful when debugging as you can look at it and check your assumptions against the actual state of GL, however working with the raw GL object IDs is (generally) faster. This is also an area where I found the API shaped by the fact I’m in a dynamic programming language, and it’s taken some time to find something I’m happy(ish) with.

IBL [0]

After that I went back to trying to implement PBR rendering. I had previously (with help) managed to get point lights working, however I really want to get image based lighting (IBL) in the mix. Now I’ve tried this a few times and have really struggled, partly because the resources available never have a complete example, they are almost always by people with enough rendering experience that they can assume some things to be obvious.. it is almost never obvious to me :p.

This time I went back and attacked this tutorial. Long story short: I don’t believe that the way he is convolving his env maps in the provided code is the way he is doing it in his renders. I made sure that my compiler was producing the EXACT same code as he had and I got completely useless results.

In a fit of desperation I went back onto github searching for any projects that have something working. Many use frameworks like 3js so while they are awesome, they were of limited help. However I did find the following:

- A 3js project where they have a tonne of the different BRDFs implemented with consistent signatures. This will be a great resource for future learning.

- A C++ project which has (what looks like) a full implementation of PBR with IBL, using pretty raw DirectX and DXUT (a DirectX equivalent of GLUT). This thing seems to be a perfect resource for me, it’s not an engine, just a project by some person looking to understand a single implementation of this. I’m stoked to be able to read this.

I immediately cloned that C++ project and started reading. They are using the same papers as I have been trying to learn from, however they clearly understood enough to fill in the gaps and (more importantly) pick a selection of approaches that worked together.

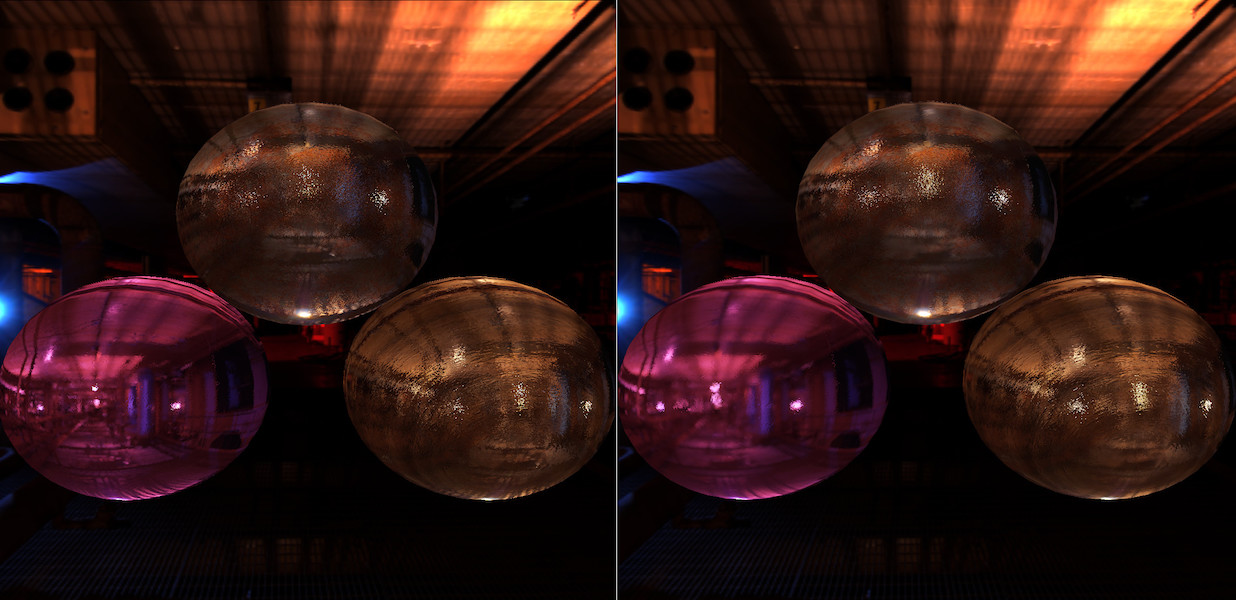

I rewrote my env map convolve shader based on what they had AND IT WORKED! The image shows the same balls taking light from env maps made for two slightly different roughness values. It’s not much, but you can see that the right version has a slightly blurrier ‘reflection’.

I’m now confident that I will be able to get the rest working. More on this in the coming weeks.

Other Stuff

Around this work a bunch of little things got done including:

In CEPL

- Fix a bunch of GL state bugs using the new cache feature

- Start migrating ugly global CEPL state to the cache

- Fix mipmap size bug in texture code

In Varjo

- Generate easier to read GLSL for symbols like π, φ, θ, etc

- Identify and write tests for a bug in function deduplication (not yet fixed). Basically the way I handle global user defined functions is crappy & I need to fix it.

In Nineveh (A library for useful GPU/Rendering functions)

- Add a function that takes a cubemap texture and makes 1 FBO for each mipmap level where the attachments of the fbo are the cube faces at that mipmap level.

That’s all folks

That’s this week done, I really hope I have something visual to show off next week.

Until then, Peace.

[0] Sorry but I won’t try to explain this section simply as I don’t understand it well enough yet.

I'm late!

Woops, I’m late posting this week. I haven’t got too much to show however there has been some progress.

The big piece of work was on the compiler. Let’s say we have this bit of code:

    (let ((fn #'sin))
      (funcall fn 10))

Which says:

line 1: Let there be a variable named fn which is bound to the function ‘sin’

line 2: Take the value bound to ‘fn’ and call it as a function with the argument ‘10’

Now as of last week this wouldn’t work in my compiler. The reason was that functions in GLSL can have overloads, for example pow can take a float, vec2, vec3 or vec4. So when I said #'sin (get the function named sin) which sin overload was I talking about?

This meant I had to write this:

    (let ((fn #'(sin :float)))
      (funcall fn 10))

Where #'(sin :float) reads as ‘the function named sin that takes one argument of type float’.

This worked but felt clumsy so my goal was to make the first code example work.

The way I went about it was to say:

When we compile a form like #'xyz where there are no argument types specified, work out all the functions named ‘xyz’ that can be called from that position in the code. Pass this set of functions around with the variable binding information in the compiler and then, when the user writes a funcall, look at the types of the arguments and work out which overload to use.

The nice thing was that the code to pick the most appropriate function for some args from a set of functions already existed, naturally as we need to do this for regular function calls. So that code was generalized a bit and recycled.
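To make the two steps concrete, here is a toy version in plain Common Lisp. The table and both function names are hypothetical and it only does exact type matches, whereas the real selection code in the compiler has more to consider:

```lisp
;; Hypothetical data: (name arg-types return-type) entries standing in
;; for the GLSL functions the compiler knows about.
(defparameter *known-functions*
  '((sin (:float) :float)
    (sin (:vec2)  :vec2)
    (sin (:vec3)  :vec3)
    (cos (:float) :float)))

(defun functions-named (name)
  "Step 1, at #'name: gather every overload with that name."
  (remove-if-not (lambda (entry) (eq (first entry) name))
                 *known-functions*))

(defun pick-overload (candidates arg-types)
  "Step 2, at the funcall: choose the candidate whose argument types match.
Exact matching only; this sketch ignores things like implicit casts."
  (or (find arg-types candidates :key #'second :test #'equal)
      (error "No overload accepts ~a" arg-types)))
```

So `(functions-named 'sin)` is the set that travels with the binding of `fn`, and `(pick-overload (functions-named 'sin) '(:vec2))` is the decision made once the argument types at the call site are known.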

The rest took some time as I kept bumping into details that made this more complicated than I had hoped, mostly because my compiler was (and is) written on the fly without any real technical knowledge. I’ve certainly learned stuff that I want to apply to the compiler, but some of these things require large changes to the codebase and I’m not really ready to get into that yet. I have some time constraints as I want to give a talk on this in the near future so I really just need it to work well enough for that.

Other than this I speed-watched a couple of intro unity courses and started reading their docs. The courses were ok’ish. I got what I needed from them but I’m hoping that wasn’t meant to be good code style as it felt very messy. Time will tell.

That’s all for today, Ciao

Baked

This week I got first class functions into CEPL. It’s not release quality yet but it seems to work which is ace.

What I can now do is define a pipeline that takes a function as a uniform. It compiles this to typecheck it but GLSL doesn’t support first-class functions so the pipeline is marked as partial.

So if I have a gpu function I’m going to use as my fragment shader in some particle system:

    (defun-g update-particle-velocities ((tex-coord :vec2)
                                         &uniform (positions :sampler-2d)
                                         (velocities :sampler-2d)
                                         (animator (function (:vec4 :vec4) :vec4)))
      (let* ((position (texture positions tex-coord))
             (velocity (texture velocities tex-coord)))
        ;; calc the new velocity
        (funcall animator position velocity)))

I can make a partial pipeline:

    (def-g-> update-velocities ()
      :vertex (full-screen-quad :vec4)
      :fragment (update-particle-velocities :vec2))

If I try and run this CEPL will complain that this is partial. Now let’s make a dumb function that slowly pulls all particles to the origin:

    (defun-g origin-sink ((pos :vec4) (vel :vec4))
      (let ((dif (- pos)))
        (+ vel (* (normalize dif) (sqrt (length dif)) 0.001))))

And now we can complete the pipeline:

(defvar new-pipeline (bake 'update-velocities :animator '(origin-sink :vec4 :vec4)))

I’m not happy with the syntax yet but the effect is to create a new pipeline with the uniform animator permanently set to our origin-sink function.

We can then map over that new pipeline like any other:

(map-g new-pipeline quad-verts :positions pos-sampler :velocities vel-sampler)

The advantage of this is that I can make a bunch of different ‘animator’ functions and make pipelines that use them just with bake. It still feels odd to me though so it’ll take some time to get used to and cleaned up.
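If it helps, the closest plain Common Lisp analogy I can think of is partially applying keyword arguments. This is only an analogy and not how CEPL implements bake; `bake-sketch` and `update-velocity` are hypothetical names, and numbers stand in for vectors:

```lisp
;; Plain Common Lisp analogy, NOT CEPL's implementation.
(defun bake-sketch (fn &rest baked-args)
  "Return a new function that calls FN with BAKED-ARGS always appended."
  (lambda (&rest args)
    (apply fn (append args baked-args))))

(defun update-velocity (position velocity &key animator)
  "The 'partial' function: it cannot run until ANIMATOR is supplied."
  (funcall animator position velocity))

(defun origin-sink (pos vel)
  "Nudge the velocity towards the origin (plain numbers for simplicity)."
  (+ vel (* -0.001 pos)))

;; The 'complete' version with the animator permanently set.
(defparameter *baked* (bake-sketch #'update-velocity :animator #'origin-sink))
```

Calling `(funcall *baked* 10.0 0.0)` runs `origin-sink` on those two values without the caller ever mentioning the animator again, which is the same feeling as mapping over the baked pipeline.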

One thing that is interesting me recently is that livecoding almost encourages more ‘static’ code. Composition is great for programming but to a degree makes things opaque to the experimenter. If you call a function that returns a function you are sitting in the repl with this function with very little insight into what it is/does. You have to maintain that mapping yourself. It may have something lexically captured, it may not.. I’m not sure where I’m going with this, except that maybe it would be cool to be able to get the code that made the lambda while at the repl so you can get some context.

Anyhoo, this next week I need to work on the compiler a bit, clean up some of the stuff above & spend some time studying.

Seeya

This and That

I seem to oscillate slowly between needing to create and needing to consume media. This week I’ve either jumped back to consume or I’m procrastinating.

Either way I’ve not been super productive, let’s start with the consumption:

Nom nom knowledge

-

Listened to Iceberg Slim’s biography - On stage one time Dave Chappele was talking about how the media industry worked and likened it to pimping. He said this book pretty much explained the system. It’s damn depressing.

- Watched some TED talks. Filtering out all the TEDX shit really makes the site more valuable

- craig venter unveils synthetic life - It’s now been 5 years since the first man-made lifeform was booted-up. Fucking incredible work. Everything this guy works on is worth your time

- your brain on communication - FMRIs taken on people being read stories. Very cool way of setting up the tests to reveal the layered nature of brain processing

- scientist_makes_ears_out_of_apples - less on the bleeding edge but very cool. Seems that stripping living things down to their cellulose structure is possible in a home lab. I’d like to mess with this some day.

- how this fbi strategy is actually creating us based terrorists - Fucking infuriating but relieving to see how much info is available through the court proceedings

- The surprisingly logical minds of babies - I loved this one. Babies can infer based on probabilities. Very cool to see how these tests are constructed and good food for thought when musing on how brains work.

- What really matters at the end of life - Watch this. It is about designing for dying. Very moving, very important, I wish I could say all my family could leave on the terms he describes. Also badass is the fact that the talk is given by a cyborg and that isn’t the point of the talk. I love this part of our future.

- The sore problem of prosthetic limbs - How to make comfortable sockets for prosthetic limbs. I’m fairly sure this can be done without the MRI step, which would make it much cheaper and more portable. I’ll have to look into this at some point

- How to read the genome and build a human being - Really cool to see how machine analysis of the genome can let us predict some characteristics with high accuracy (height to within 5cm for example). A good intro talk

- Adam Savage’s love letter to cosplay - Passion and community, I loved this talk.

- I’ve also been listening to a book called ‘The Information: A History, a Theory, a Flood’ again. It’s an outstanding walk through our history of understanding what information is. From African drums, to Morse code, to computers, to genes. This book rules. Read/Listen to it. I’ve been repeating 2 chapters this last week trying to bake them into my brain. The first is on entropy (and how information & entropy are the same), and the second is on genes and how information flows from and through us.

Making stuffs

The first order of business was to look at PBR. Previously I had got deferred point lights to work, however I failed hard at the IBL step. Luckily I rediscovered this tutorial and understood how it fit into what I was doing. Last time I had tried to stick to one paper as (in my ignorance) each approach felt very different to me.

I wrote the shader in lisp and immediately ran into a few bugs in my compiler. This sucked and the fixes I made weren’t satisfying. The problems were dumb side-effects of how I was doing my flow analysis. I’m pretty sure now that I want to get my compile-time-values feature finished and then rewrite the code that uses the flow analyzer to use that instead.

I then ran into a few rendering issues. The first turned out to be a bug in my implementation of a function that samples from a cross texture (commonly used for environment maps). The next 2 I haven’t fixed yet:

- the env map filtering pass is super slow

- the resulting env map has horrifying banding

I checked the generated glsl and it looks fine. I’m struggling to work out how I’m screwing this up. I guess it could be that I have a bug in how I’m binding/unbinding textures and this is causing a flush..that could account for the speed…and maybe the graphical fuckups? I don’t know man.

Despite that it feels good to be back in that codebase. One thing that really stood out though was how much first-class functions could make the codebase cleaner and more flexible. I had started that feature partially for fun but more and more it seems it’s going to be very useful.

Given that, I spent last night digging into that branch of my compiler. I decided that even without support for closures it would still be a good feature. So I did the following:

- made the compiler throw an exception on attempts to pass a closure as a value. I’ve tried to make the error message friendly so people get what is happening

- fixed a couple of glsl generation bugs around passing functions as objects

I then spent a little time looking into how to generalize my compile-time-value feature. This will mean I can pass around not only functions but also values of user-defined types. I’m going to use this for vector spaces. I realized that this doesn’t currently have enough power to cover all the things I could do with flow analysis. This is a bummer, but at this point I had drunk too much wine to come up with good ideas so I called it a night.

Next week I need to crack the new version of the spaces feature, get that merged in and get back into the PBR.

.. Oh and Christmas :p